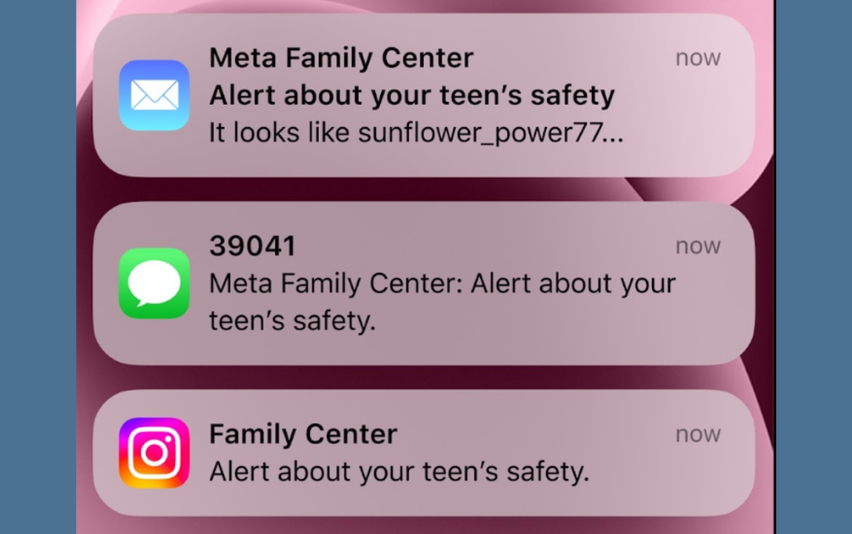

Online safety for younger users is becoming a major priority across social platforms. In a new safety initiative, Instagram is testing a feature that alerts parents when teenagers search for content related to self-harm or sensitive mental health topics.

The update reflects growing industry efforts to protect minors online and provide guardians with greater visibility into potentially harmful digital behavior. As concerns rise about social media’s impact on youth mental health, platforms are expanding parental oversight tools.

Why Teen Safety Features Are Expanding

Young users spend significant time on social platforms, making digital safety a critical responsibility. Instagram’s teen safety features reflect increasing pressure on platforms to reduce mental health risks and exposure to harmful content.

Key concerns driving safety tools include:

- Exposure to harmful content

- Searches that signal mental health vulnerability

- Online influence risks

- Peer pressure dynamics

- Algorithm-driven content exposure

Safety systems aim to reduce these risks.

How Instagram’s Parental Alerts Work

The feature notifies parents when a teen account searches for or engages with content related to self-harm. Alerts give guardians an opportunity to intervene, offer support, or discuss concerns.

The expansion of parental controls on social media indicates a shift toward safety as a shared responsibility between platforms and families.

Alert mechanisms may include:

- Search behavior notifications

- Content category flags

- Activity summaries

- Safety guidance prompts

- Parent dashboard insights

Such tools raise guardians’ awareness privately, without exposing a teen’s activity publicly.

Balancing Privacy and Protection

Teen safety features must balance parental oversight with young users’ privacy rights. Platforms aim to provide protective alerts while avoiding invasive monitoring or stigma.

The rise of frameworks for teen online safety highlights this delicate balance.

Considerations include:

- Confidentiality safeguards

- Limited data sharing

- Contextual alerts

- Educational messaging

- Support resources

Responsible design supports trust.

Social Media and Youth Mental Health

Research and public debate increasingly examine how social media exposure affects adolescent well-being. Platforms are responding by integrating safety interventions into the user experience.

Protective features include:

- Content warnings

- Help resources

- Crisis support links

- Algorithm adjustments

- Guardian tools

Safety ecosystems extend beyond moderation.

Why Platforms Are Adding Guardian Tools

Parents often seek visibility into children’s online activity without excessive surveillance. Guardian tools provide insight while encouraging open communication.

Benefits include:

- Early risk awareness

- Support opportunities

- Digital literacy

- Family dialogue

- Prevention strategies

Collaboration improves outcomes.

Industry Trend Toward Youth Protection

Technology companies globally are expanding youth safety features in response to regulatory and societal pressure. Parental alerts represent one of many protective measures emerging across platforms.

Trends include:

- Age-specific controls

- Safety notifications

- Content filtering

- Screen-time tools

- Mental health resources

Child safety is becoming core platform policy.

Why This Update Matters

Instagram’s parental alert feature reflects a broader shift toward proactive digital safety rather than reactive moderation. By identifying concerning search patterns early, platforms enable supportive intervention before harm occurs.

As social media becomes deeply embedded in youth life, protective design will shape platform responsibility and public trust.

Safety is becoming a central pillar of social platform evolution.

Frequently Asked Questions

What is Instagram’s teen safety alert feature?

The feature notifies parents when teens search for or engage with self-harm-related content, helping guardians monitor and support online well-being.

How do parental control tools on social media work?

Parental control tools on social media provide guardians with insights into teen activity, alerts about risks, and safety management options.

Why is online safety for teens important?

Online safety for teens helps protect young users from harmful content, mental health risks, and negative online influences.

Will parents see all teen searches?

Alerts focus on sensitive safety-related searches rather than general activity, maintaining privacy while providing protection.

Are teen safety features expanding?

Yes. Platforms increasingly add youth protection tools in response to safety concerns and regulatory expectations.

Instagram’s test of parental alerts for teen searches related to self-harm highlights the growing emphasis on youth protection in digital environments. By enabling guardians to identify potential risks early, the platform strengthens preventive safety mechanisms rather than relying solely on content moderation.

As social media continues to shape adolescent experiences, proactive safety features will play a crucial role in safeguarding mental well-being. The evolution of teen safety tools reflects a broader transformation in platform responsibility, from engagement maximization to user protection.

In the future of social media, safety will be as important as connectivity.