Artificial intelligence is transforming how content is created and shared across social platforms. To address growing concerns around misinformation and authenticity, X is testing labels that identify posts generated or heavily assisted by AI.

The initiative reflects a broader industry push toward transparency in digital content. As generative AI tools become more accessible, distinguishing human-created content from machine-generated material is becoming increasingly important for user trust and platform integrity.

Why AI Content Transparency Matters

The rapid rise of generative AI has blurred the boundary between human- and machine-created content. Without clear disclosure, users may struggle to judge whether a post is authentic or credible. AI-generated content labels aim to give users context about how a post was created.

Transparency mechanisms help platforms address:

- Misinformation risks

- Synthetic media spread

- Content authenticity doubts

- Manipulation concerns

- User trust erosion

Clear labeling lets users factor a post's origin into how they read, trust, and share it.

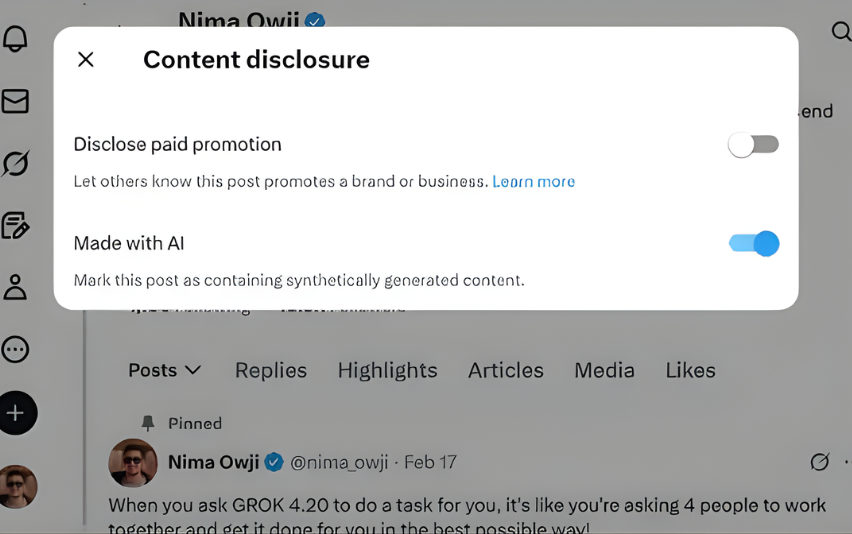

How AI Labels May Work on X

AI content labels typically appear alongside posts identified as AI-generated or AI-assisted. Detection may rely on creator self-disclosure, automated analysis of the content itself, or metadata signals embedded in uploaded files.
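X has not published how its labels are assigned, so the following is only a minimal sketch, assuming a platform combines the three signal types mentioned above into a single labeling decision. Everything in it is hypothetical: the `PostSignals` fields, the `choose_label` function, the 0.9 threshold, and both label strings are illustrative, not X's API or policy. It shows one plausible design choice: explicit signals (creator disclosure, provenance metadata) are trusted outright, while a probabilistic classifier only triggers a softer label above a high threshold, which limits false positives on human-written posts.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PostSignals:
    """Hypothetical signals a platform might hold for one post (illustrative only)."""
    creator_disclosed_ai: bool         # creator self-declared the post as AI-made
    provenance_says_ai: bool           # embedded file metadata declares AI generation
    classifier_score: Optional[float]  # 0.0-1.0 detector likelihood; None if not run


def choose_label(signals: PostSignals, threshold: float = 0.9) -> Optional[str]:
    """Return a label for the post, or None to leave it unlabeled.

    Hypothetical policy: explicit signals win over the classifier, and the
    classifier alone only yields a softer, hedged label above `threshold`.
    The post itself is never removed; this only decides which label to show.
    """
    # Explicit disclosure or provenance metadata is treated as authoritative.
    if signals.creator_disclosed_ai or signals.provenance_says_ai:
        return "AI-generated"
    # Automated detection is probabilistic, so require high confidence
    # and use more cautious wording to limit the cost of false positives.
    if signals.classifier_score is not None and signals.classifier_score >= threshold:
        return "May contain AI-generated content"
    return None


if __name__ == "__main__":
    print(choose_label(PostSignals(True, False, None)))   # creator disclosed
    print(choose_label(PostSignals(False, False, 0.95)))  # classifier only
    print(choose_label(PostSignals(False, False, 0.40)))  # below threshold
```

The function only ever returns a label or `None`; it never takes a post down, which matches the idea that labels add context rather than remove content.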

Across platforms, AI content detection tools are evolving as companies invest more in identifying synthetic media and machine-generated text.

Potential labeling approaches include:

- “AI-generated” tags

- Disclosure badges

- Context notes

- Content origin markers

- Creation method indicators

Such features improve transparency without removing content.

The Growing Need for Social Media AI Disclosure

As generative AI becomes widely accessible, platforms face pressure to ensure users understand content origins. The expansion of social media AI policy frameworks reflects global concern over deepfakes, automated narratives, and synthetic misinformation.

Disclosure policies aim to:

- Protect information integrity

- Reduce deception risks

- Support media literacy

- Maintain platform credibility

- Meet regulatory expectations

Transparency is becoming a core platform responsibility.

Benefits of AI Labels for Users and Platforms

AI-generated content labels benefit both the people reading posts and the platforms hosting them.

For users:

- Clear content origin

- Better credibility assessment

- Reduced misinformation exposure

- Informed engagement

- Media awareness

For platforms:

- Trust reinforcement

- Regulatory alignment

- Safety compliance

- Content accountability

- Reputation protection

Labeling strengthens trust between users, creators, and platforms.

AI Transparency Trends Across Social Platforms

Major technology companies are introducing disclosure tools for synthetic media. Labels, watermarks, and provenance systems are emerging as standard features across digital platforms.

Industry trends include:

- AI content tagging

- Synthetic media detection

- Provenance metadata

- Creator disclosure tools

- Authenticity verification

X’s test fits this broader industry trend.

Challenges in Detecting AI-Generated Content

Identifying AI-created material remains technically complex. Advanced generative models produce text and media that closely mimic human expression.

Key challenges include:

- Detection accuracy

- False positives

- Creator disclosure compliance

- Evolving AI models

- Cross-platform consistency

Platforms must balance catching AI-generated content against the risk of wrongly labeling posts written by humans.

Why This Update Matters

AI content labels represent an important step toward transparency in digital communication. As generative AI reshapes online discourse, disclosure tools help preserve authenticity and trust.

Social platforms are shifting from open content hosts toward curated information environments. Transparency features are likely to shape how credibility is judged in the AI era.

Understanding content origin is becoming essential to digital literacy.

Frequently Asked Questions

What are AI-generated content labels?

AI-generated content labels identify posts created or significantly assisted by artificial intelligence. They provide transparency about content origin and creation methods.

Why is AI content detection important?

AI content detection helps platforms recognize machine-generated material, reducing misinformation risks and improving authenticity awareness among users.

What is a social media AI policy?

A social media AI policy is a platform's set of rules governing AI-generated content, disclosure requirements, and transparency standards for synthetic media.

Will AI labels remove content?

No. Labels typically add context rather than removing posts: they tell users how content was created while leaving the post itself available.

Will all platforms add AI labels?

Many social platforms are developing AI disclosure tools. As generative AI grows, labeling is likely to become a standard feature across digital platforms.

X’s testing of AI-generated content labels highlights the growing importance of transparency in the age of generative media. As artificial intelligence increasingly shapes online expression, distinguishing machine-created content from human communication becomes essential for trust and credibility.

Disclosure tools like AI labels represent the next stage in platform governance — balancing innovation with accountability. In the evolving digital ecosystem, transparency is emerging as the foundation of authenticity.

Because in the AI era, understanding who — or what — created content matters as much as the content itself.